RDD Cycle

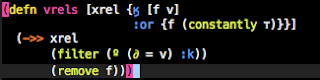

First, I'd like to address the TLA RDD. I use the term RDD because I'm relying on the REPL to drive my development. More specifically, when I'm developing, I create an s-expression that I believe will solve the problem at hand. Once I'm satisfied with the s-expression, I send it to the REPL for immediate evaluation. The result is either a value that I inspect manually or a change to a running application. Either way, I look at the result, determine whether the problem is solved, and repeat the process: craft an s-expression, send it to the REPL, and evaluate the result.

If that isn't clear, hopefully the video below demonstrates what I'm talking about.

If you're unfamiliar with RDD, the previous video might leave you wondering: What's so impressive about RDD? To answer that question, I think it's worth making explicit what the video is: an example of a running application that needs to change, a change taking place, and verification that the application runs as desired. The video demonstrates change and verification; what makes RDD so effective to me is what's missing: (a) restarting the application, (b) running something other than the application to verify behavior, and (c) moving out of the source to execute arbitrary code. Eliminating those 3 steps allows me to focus on what's important: writing and running code that will be executed in production.
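For readers who can't watch the video, here is a minimal sketch of the same cycle. It is written in Python rather than as s-expressions, and every name in it (`app_state`, `price`, `handle_order`) is hypothetical, made up for illustration rather than taken from any real application. In an editor-connected REPL, each step would be sent for evaluation on its own; the module-level dictionary merely stands in for the state of a running application.

```python
# Stand-in for a long-running application: state builds up while it runs.
# All names here (app_state, price, handle_order) are hypothetical.
app_state = {"orders_seen": 0}


def price(order):
    """First attempt: quantity times unit price."""
    return order["qty"] * order["unit_price"]


def handle_order(order):
    # `price` is looked up by name on every call, so a later redefinition
    # takes effect without restarting anything.
    app_state["orders_seen"] += 1
    return price(order)


# Step 1: evaluate an expression and inspect the value.
before = handle_order({"qty": 10, "unit_price": 3})


# Step 2: the requirement changes (10% off for 10 or more items), so
# re-evaluate only the definition that needs to change.
def price(order):
    total = order["qty"] * order["unit_price"]
    return total * 9 // 10 if order["qty"] >= 10 else total


# Step 3: verify against the same running "application".
after = handle_order({"qty": 10, "unit_price": 3})

print(before, after, app_state["orders_seen"])
```

Evaluated top to bottom, this prints `30 27 2`: the behavior changed between the two calls, yet the accumulated state survived because nothing was restarted.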

Feedback

I've found that, while writing software, getting feedback is the single largest time thief. Specifically, there are two types of feedback that I want to get as quickly as possible: (1) Is my application doing what I believe it is? (2) What does this arbitrary code return when executed? I believe the above video demonstrates how RDD can significantly reduce the time needed to answer both of those questions.

In my career I've spent significant time writing applications in C#, Ruby, & Java. While working in C# and Java, if I wanted to make and verify (in the application) any non-trivial change to an application, I would need to stop the application, rebuild/recompile, & restart the application. I found the slowness of this feedback loop to be unacceptable, and wholeheartedly embraced tools such as NUnit and JUnit.

I've never been as enamored with TDD as some of my peers; regardless, I absolutely endorsed it. The Design aspect of TDD was never that enticing to me, but tests did allow me to get feedback at a significantly faster pace. Tests also provide another benefit while working with C# & Java: They're the poorest man's REPL. Need to execute some arbitrary code? Write a test that you know you're going to delete immediately, and execute away. Of course, tests have other pros and cons. At this moment I'm limiting my discussion of tests to the context of rapid feedback, but I'll address TDD & RDD later in this entry.

Ruby provided a more effective workflow (technically, Rails provided a more effective workflow). The Rails applications I worked on were similar to my RDD experience: I was able to make changes to a running application, refresh a webpage, and see the result of the new behavior. Ruby also provided a REPL, but I always ran the REPL external to my editor (I knew of no other option). This workflow was the closest, in terms of efficiency, that I've ever felt to what I have with RDD; however, there are some minor differences that add up to an inferior experience: (a) having to switch out of a source file to execute arbitrary code is an unnecessary nuisance and (b) refreshing a webpage destroys any client-side state that you've built up. I have no idea if Ruby now has editor & REPL integration; if it does, then it's likely on par with the experience I have now.

Semantics

I like Simon's description, but I don't believe that we need to break things down into two different TLAs. Quite simply, (sadly) I don't think enough people are developing in this way, and the additional specification causes a bit of confusion among people who aren't familiar with RDD. However, Simon's description is so spot on that I felt the need to describe why I'm choosing to ignore his classifications.

Simon Katz's description:

- It's important to distinguish between two meanings of "REPL" - one is a window that you type forms into for immediate evaluation; the other is the process that sits behind it and which you can interact with from not only REPL windows but also from editor windows, debugger windows, the program's user interface, etc.

- It's important to distinguish between REPL-based development and REPL-driven development:

  - REPL-based development doesn't impose an order on what you do. It can be used with TDD or without TDD. It can be used with top-down, bottom-up, outside-in and inside-out approaches, and mixtures of them.

  - REPL-driven development seems to be about "noodling in the REPL window" and later moving things across to editor buffers (and so source files) as and when you are happy with things. I think it's fair to say that this is REPL-based development using a series of mini-spikes. I think people are using this with a bottom-up approach, but I suspect it can be used with other approaches too.

RDD & TDD

RDD and TDD are not in direct conflict with each other. As Simon notes above, you can do TDD backed by a REPL. Many popular testing frameworks have editor-specific libraries that provide immediate feedback through REPL interaction.

When working on a feature, the short-term goal is to have it working in the application as fast as possible. Arbitrary execution, live changes, and only writing what you need are 3 things that can help you complete that short-term goal as fast as possible. The video above is the best example I have of how you go from a feature request to software that does what you want in the smallest amount of time. In the video, I only leave the buffer to verify that the application works as intended.

If the short-term goal was the only goal, RDD without writing tests would likely be the solution. However, we all know that there are many other goals in software. Good design is obviously important. If you think tests give you better design, then you should probably mix both TDD & RDD. Preventing regression is also important, and that can be accomplished by writing tests after you have a working feature that you're satisfied with. Regression tests are great for giving confidence that a feature works as intended and will continue to in the future.
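As a rough illustration of writing regression tests after the fact, the sketch below pins down values that were first checked by hand. It is in Python using the standard `unittest` module; the `price` function and its discount rule are hypothetical, invented for this example only.

```python
import unittest


def price(order):
    """Hypothetical feature already verified by hand at the REPL."""
    total = order["qty"] * order["unit_price"]
    return total * 9 // 10 if order["qty"] >= 10 else total


class PriceRegressionTest(unittest.TestCase):
    # Each expected value was first observed interactively, then pinned here.
    def test_small_order_has_no_discount(self):
        self.assertEqual(price({"qty": 2, "unit_price": 5}), 10)

    def test_bulk_order_gets_ten_percent_off(self):
        self.assertEqual(price({"qty": 10, "unit_price": 3}), 27)


# Run the suite in-process, as one might from an editor-connected REPL.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(PriceRegressionTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Because the suite runs inside the current process, the tests can be re-evaluated from the same session that produced the feature, without leaving the source buffer.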

REPL Driven Development doesn't need to replace your current workflow; it can also be used to extend your existing TDD workflow.